Resilient business operations (BizOps) and applications are key to running businesses and ensuring the right insights are available at the right time to the right stakeholders.

In our first article (XOps: The right approach for production business applications) in this series, we introduced XOps as the future for setting up and running production business applications at scale. Tredence’s XOps offering provides a combination of cross-skilled people, strong partnerships and the right accelerators for each point in the production business process value chain.

In this second article in the XOps series, we focus on BizOps processes and services. BizOps is the core of the XOps process. All decisions and key insights come from business applications, and ensuring the stability, resilience and predictability of these applications forms the crux of BizOps. An organization could have cutting-edge DevOps processes, a state-of-the-art data pipeline and ML model monitoring in place, all running on an optimized cloud server, but if the business process is not designed to scale the right way, it will all be for naught.

What problem are we solving?

For the purposes of this article (and subsequent ones), we found it pragmatic to pick one use case for illustration. We will focus here on the classic trade promotion effectiveness problem, which is crucial for consumer packaged goods (CPG) companies’ day-to-day operations.

Trade promotion processes and programs are pivotal to boosting sales, building brand equity with end consumers & shoppers and strengthening channel partnerships with online and offline retailers. The average CPG company allocates ~14% of its total revenue to trade promotion activities, which underlines these programs’ importance.

However, despite growing trade promotion budgets, many CPG companies still rely on rudimentary trade spending practices and do not look for ways to optimize these budgets. To determine whether a trade promotion was effective, identifying the baseline (the sales that would have occurred without the promotion) is critical. The baseline must be estimated at the same level at which sales & promotions teams design and implement these initiatives for maximum efficiency. This creates significant computational complexity, because tens of thousands of ML models need to be built across product groups, retailers and other channels.

The trade promotion process should be able to explain, at a high level of granularity, which promotional tactics and discount levels worked well in the past, and which Promoted Product Groups (PPGs) will yield a higher ROI (Return on Investment) compared to previous initiatives. It should also allow simulation of the incremental impact of a promotion, and interface with the in-field trade promotion management system to validate and activate promotions.
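To make the ROI comparison across PPGs concrete, here is a minimal sketch. It assumes one common convention (ROI = incremental margin minus promotion spend, divided by promotion spend) and uses made-up figures; the `PromoResult` class, its field names and the PPG labels are hypothetical and not part of any Tredence tooling.

```python
from dataclasses import dataclass


@dataclass
class PromoResult:
    """One past promotion for a Promoted Product Group (hypothetical structure)."""
    ppg: str
    baseline_units: float   # estimated sales without the promotion
    actual_units: float     # observed sales during the promotion
    unit_margin: float      # margin earned per unit sold
    promo_spend: float      # total trade spend on the promotion

    @property
    def incremental_units(self) -> float:
        # Uplift attributed to the promotion: actual minus baseline.
        return self.actual_units - self.baseline_units

    @property
    def roi(self) -> float:
        # Assumed convention: (incremental margin - spend) / spend.
        incremental_margin = self.incremental_units * self.unit_margin
        return (incremental_margin - self.promo_spend) / self.promo_spend


# Illustrative numbers only.
results = [
    PromoResult("PPG-snacks", baseline_units=1000, actual_units=1500,
                unit_margin=2.0, promo_spend=800),
    PromoResult("PPG-beverages", baseline_units=2000, actual_units=2200,
                unit_margin=3.0, promo_spend=900),
]

# Rank PPGs from highest to lowest ROI.
ranked = sorted(results, key=lambda r: r.roi, reverse=True)
for r in ranked:
    print(f"{r.ppg}: incremental units={r.incremental_units:.0f}, ROI={r.roi:.2f}")
```

Everything here depends on the baseline estimate: an overstated baseline understates incremental units and therefore ROI, which is why the baseline has to be computed at the level at which promotions are planned.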

The process is ready, but can it scale?

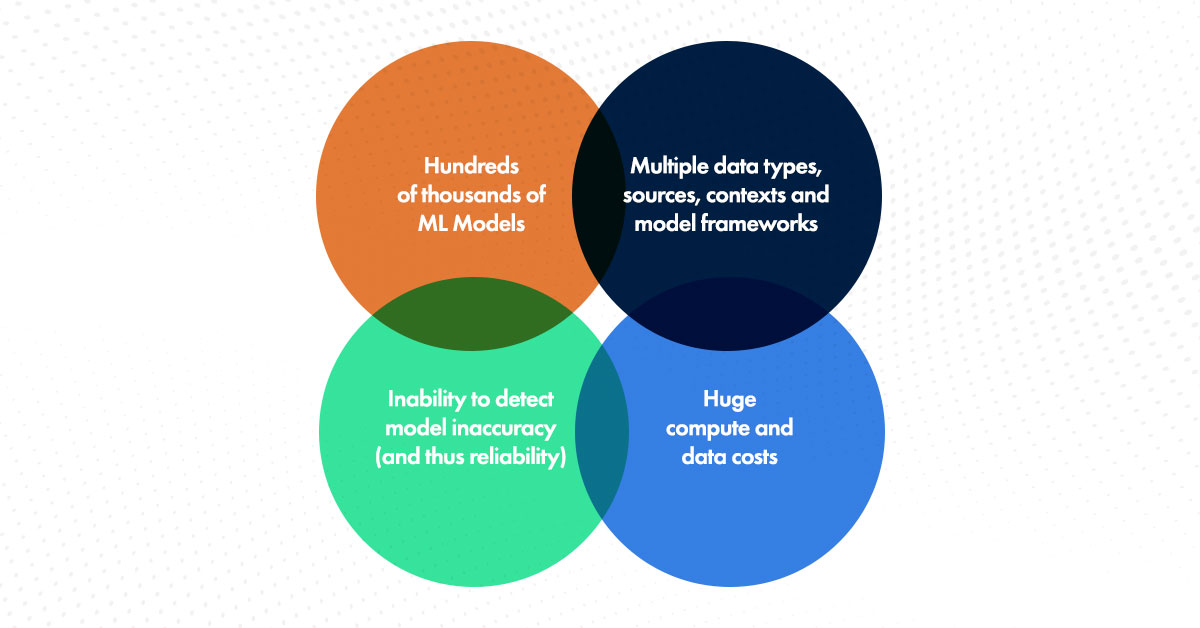

The business case for a trade promotion solution is clear, but the actual value can only be derived if it is successfully built. Only 10% of CPG companies deploy trade promotion analytics at scale. Surely if the process is so important, there should be higher adoption in production. Why is it then not happening? The answer lies in the ability to scale the trade promotion process. Multiple challenges affect the ability to deploy and scale a trade promotion solution.

With each rerun, the incremental data load grows as new data comes in, which increases the number of intersections (for example, product group by retailer) that must be handled when generating features and training models. Trade Promotion Effectiveness (TPE) models are generally time-series models that forecast sales. These forecasts must be accurate enough to measure the impact of a promotion and help businesses make strategic decisions on their promotion spend. Data practitioners need to experiment with different time-series models for each stock-keeping unit (SKU) to obtain accurate forecasts and determine the best model to deploy in production.
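The per-SKU experimentation described above can be sketched as a simple holdout backtest. This is an illustration under stated assumptions, not a production approach: the three candidate forecasters are deliberately trivial stand-ins for real time-series models, the weekly sales figures are invented, and names such as `select_model` and `CANDIDATES` are hypothetical.

```python
from statistics import mean


def naive(history, horizon):
    # Repeat the last observed value.
    return [history[-1]] * horizon


def moving_average(history, horizon, window=4):
    # Repeat the mean of the last `window` observations.
    return [mean(history[-window:])] * horizon


def seasonal_naive(history, horizon, season=4):
    # Repeat the last full season, cycling if the horizon is longer.
    return [history[-season + (i % season)] for i in range(horizon)]


def mae(actual, predicted):
    # Mean absolute error between two equal-length sequences.
    return mean([abs(a - p) for a, p in zip(actual, predicted)])


CANDIDATES = {
    "naive": naive,
    "moving_average": moving_average,
    "seasonal_naive": seasonal_naive,
}


def select_model(series, holdout=4):
    """Score every candidate on the last `holdout` points; return the best name and all scores."""
    train, test = series[:-holdout], series[-holdout:]
    scores = {name: mae(test, fn(train, holdout)) for name, fn in CANDIDATES.items()}
    best = min(scores, key=scores.get)
    return best, scores


# Invented sales histories for two SKUs.
sales_by_sku = {
    "SKU-A": [100, 120, 90, 110, 100, 120, 90, 110, 100, 120, 90, 110],
    "SKU-B": [50, 52, 51, 53, 52, 54, 53, 55, 56, 56, 56, 56],
}

best_by_sku = {sku: select_model(series)[0] for sku, series in sales_by_sku.items()}
print(best_by_sku)
```

Even in this toy version the selection loop runs once per SKU and once per candidate model, so its cost grows with both the number of SKUs and the number of models tried. That is the scaling pressure the section describes.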

Why BizOps?

With all this complexity, it is a nightmare to keep every process running smoothly. Multiple data streams from different sources, data quality checks, ML model integrations and ingestion of the model results into downstream TPE dashboards for consumption by sales managers: all of these need to run smoothly all the time to ensure continued value realization. The moment something breaks, be it a data pipeline failing to run, a drop in the quality of incoming data from one of the many sources, or an ML model drifting, the dashboard being used to make decisions ceases to be useful and stays that way until the issue is fixed. The more often such issues occur, the more downtime the dashboard suffers, and the more downtime it suffers, the less end users trust it. Eventually, they will try to run trade promotions on their own without the tool, resulting in a lot of disruption.

BizOps services can ensure that the end-to-end process stays resilient and stable. This begins at the time of the application design itself – the process (in this case TPE) needs to be designed keeping in mind future growth in models and incoming data volumes. This requires deep knowledge of the business cases themselves, and strong industry expertise, to be able to set up the process for scale.

A holistic view of all applications running on the local or cloud server is also required, to ensure the process does not compete for resources with other production applications. Often cost is the only consideration when designing cloud systems, but a broader view covering application usage timings, SLAs for support and the spread of business users across time zones and regions is needed to ensure the process is optimized the right way. Finally, both the interim processes in the TPE workflow and the final dashboard usage should be diligently tracked, to preempt possible issues and to validate adoption by end users.
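As a rough illustration of what such tracking could look like, the sketch below checks for the three failure modes mentioned earlier: a pipeline that did not run, a drop in incoming data quality, and a drifting model. All thresholds, field names and function names are assumptions chosen for the example. A real setup would take them from the agreed SLAs and the monitoring tooling in use.

```python
from datetime import datetime, timedelta
from statistics import mean


def pipeline_is_fresh(last_run, now, max_age=timedelta(hours=24)):
    # The pipeline counts as healthy if it completed within the allowed window.
    return now - last_run <= max_age


def null_rate_ok(rows, field, max_null_rate=0.05):
    # Data quality proxy: share of rows where `field` is missing.
    if not rows:
        return False
    nulls = sum(1 for row in rows if row.get(field) is None)
    return nulls / len(rows) <= max_null_rate


def model_not_drifting(recent_errors, reference_errors, tolerance=1.5):
    # Drift proxy: recent forecast error should stay within `tolerance`
    # times the error observed when the model was validated.
    return mean(recent_errors) <= tolerance * mean(reference_errors)


def dashboard_health(last_run, now, rows, recent_errors, reference_errors):
    """Return one pass/fail flag per check (hypothetical check names)."""
    return {
        "pipeline_fresh": pipeline_is_fresh(last_run, now),
        "data_quality": null_rate_ok(rows, "units_sold"),
        "model_stable": model_not_drifting(recent_errors, reference_errors),
    }


# Invented inputs: a stale pipeline, clean data and a stable model.
now = datetime(2024, 1, 10, 9, 0)
status = dashboard_health(
    last_run=datetime(2024, 1, 8, 9, 0),
    now=now,
    rows=[{"units_sold": 10}, {"units_sold": 12}, {"units_sold": 9}],
    recent_errors=[4.0, 5.0, 6.0],
    reference_errors=[4.0, 4.0, 4.0],
)
failed = [name for name, ok in status.items() if not ok]
print(failed)
```

Surfacing a failed check before business users open the dashboard is what turns tracking into prevention rather than after-the-fact repair.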

Close interaction with the end business users will also help in ensuring false metrics are not chased and confirming the value and validity of the business process. Once the business process is in production, close tracking will thus ensure it stays relevant.

Of course, the data, ML models and code will continue to change and evolve. And this means the production applications need to be continuously updated to stay relevant. As more applications are developed and the number of adopters increases, the security of the process also becomes important. This is the realm of DevSecOps, which we will cover in our next article.

In conclusion

Business leaders must understand the importance of having robust BizOps services and processes in place to support the smooth functioning of their business. Tredence’s industry and practice experts help organizations set up vertical- and function-specific business processes the right way, through rigorous design and a mindset geared for scale, by bringing in decades of use-case expertise coupled with technical know-how.

If you want to understand how your business-critical process can be set up and maintained the right way, check out www.tredence.com or write to us at marketing@tredence.com to discuss further.
